A Sensitivity Analysis Toolkit for Mitigation of Distributed Generation Voltage Rise

Fabian Tamp

October 2012

This isn't your average problem - you can't just fix it by buying a better generator. You need COORDINATION.

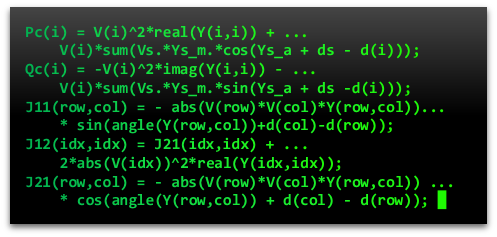

So, I built a toolkit based on SENSITIVITY ANALYSIS, a magical technique that makes understanding and working with network voltage relationships much simpler.

[Figure panels: Simulation, Sensitivity Analysis]

I calculated the sensitivities in a simulation with a trick called PERTURB-AND-OBSERVE, which basically just means DROP A GENERATOR ON THE NETWORK AND SEE WHAT HAPPENS.
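To make perturb-and-observe concrete, here's a minimal sketch in Python. It is not the toolkit's actual code: `simulate` is a hypothetical stand-in for a real load-flow solver (a three-bus radial feeder with made-up resistances), and every name and number in it is an assumption for illustration only.

```python
def simulate(injections_kw, r_ohm=(0.05, 0.04, 0.06), v_nom=240.0):
    """Toy radial feeder (hypothetical): returns the voltage at each bus.

    Uses the rough linear approximation dV ~ R * P / V, where R is the
    resistance shared by the paths from the source to the two buses.
    """
    voltages = []
    for i in range(len(injections_kw)):
        rise = 0.0
        for j, p_kw in enumerate(injections_kw):
            shared = sum(r_ohm[: min(i, j) + 1])
            rise += shared * p_kw * 1000.0 / v_nom
        voltages.append(v_nom + rise)
    return voltages


def perturb_and_observe(base_kw, step_kw=1.0):
    """Return sens[i][j]: volts of change at bus i per kW injected at bus j."""
    base_v = simulate(base_kw)
    n = len(base_kw)
    sens = [[0.0] * n for _ in range(n)]
    for j in range(n):
        perturbed = list(base_kw)
        perturbed[j] += step_kw          # drop a small generator on bus j
        new_v = simulate(perturbed)      # ...and see what happens
        for i in range(n):
            sens[i][j] = (new_v[i] - base_v[i]) / step_kw
    return sens


sens = perturb_and_observe([0.0, 0.0, 0.0])
for row in sens:
    print(["%.3f" % s for s in row])
```

In this toy feeder the largest entries sit at the far end of the line, which is the kind of pattern a sensitivity matrix makes easy to spot.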

There's no point in having this information unless we use it for decision-making, so I used the sensitivity analysis toolkit to implement a technique called

VAR CONTROL

on a model of a network in Western Sydney.

Doing the coordination was really easy because sensitivity analysis makes it really obvious where you get the most 'bang for your buck'.
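Here's a rough sketch of what 'bang for your buck' coordination could look like. This is an assumed greedy rule, not necessarily the strategy used on the Western Sydney model: ask the generator with the highest sensitivity to absorb reactive power first, then move down the list. The function name, the sensitivities (volts per kvar absorbed), and the capacities are all hypothetical.

```python
def dispatch_vars(sens_v_per_kvar, rise_v, capacity_kvar):
    """Greedy sketch: use the most effective generators first.

    sens_v_per_kvar[g]: volts removed at the problem bus per kvar absorbed by g.
    rise_v:             voltage rise (in volts) we want to remove.
    capacity_kvar[g]:   maximum kvar that generator g can absorb.
    Returns (kvar absorbed by each generator, volts of rise left over).
    """
    order = sorted(range(len(sens_v_per_kvar)),
                   key=lambda g: sens_v_per_kvar[g], reverse=True)
    dispatch = [0.0] * len(sens_v_per_kvar)
    remaining = rise_v
    for g in order:
        if remaining <= 0:
            break
        if sens_v_per_kvar[g] <= 0:
            continue  # this generator cannot help at the problem bus
        q = min(capacity_kvar[g], remaining / sens_v_per_kvar[g])
        dispatch[g] = q
        remaining -= q * sens_v_per_kvar[g]
    return dispatch, max(remaining, 0.0)


# Hypothetical numbers: three generators, 3 V of rise to remove.
dispatch, leftover = dispatch_vars([0.02, 0.05, 0.01], 3.0, [50, 40, 50])
print(dispatch, leftover)
```

With these made-up numbers the second generator is used up to its limit before the first is touched, and the least effective one is never called on.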

Using this strategy, I could fix around 90% of the voltage rise. Hooray!

[Figure panels: Before, After]

Then, I tried to find network sensitivities from actual network data. That would be awesome, because you could make

INFORMED DECISIONS WITHOUT SIMULATIONS.

But, nobody had the network load data I needed... so I faked... erm, synthesised the data, using the

MIGHTY POWER OF STATISTICS!!!

Here's a visualisation of the synthesised network load data and the resultant voltages. Colour represents voltage, and blob size represents load size. Pretty!

To get the sensitivities, I thought it best to look for situations where perturb-and-observe has occurred naturally. The thing is, you need

A LOT OF DATA

before that happens coincidentally, and with a lot of data comes

A LOT OF PROBLEMS.
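One way to hunt for 'natural' perturb-and-observe events, sketched below, is to compare every pair of samples and keep the pairs where exactly one load changed. This is an assumed approach for illustration, not necessarily the test algorithm used here; the function name, thresholds, and sample data are all hypothetical.

```python
from itertools import combinations


def natural_perturbations(loads, voltages, tol_kw=0.05, min_step_kw=0.5):
    """Find sample pairs in which exactly one load moved.

    loads[t][k]:    load at bus k in sample t (kW).
    voltages[t][i]: voltage at bus i in sample t (V).
    Returns a list of (bus, [dV_i / dP_bus for each bus i]).
    """
    estimates = []
    for a, b in combinations(range(len(loads)), 2):
        deltas = [loads[b][k] - loads[a][k] for k in range(len(loads[a]))]
        moved = [k for k, d in enumerate(deltas) if abs(d) > tol_kw]
        if len(moved) != 1 or abs(deltas[moved[0]]) < min_step_kw:
            continue  # several loads moved at once, or the step was too small
        bus = moved[0]
        dv = [(voltages[b][i] - voltages[a][i]) / deltas[bus]
              for i in range(len(voltages[a]))]
        estimates.append((bus, dv))
    return estimates


# Made-up samples: two buses, three points in time.
loads = [[2.0, 1.0], [3.0, 1.0], [3.0, 2.5]]
voltages = [[240.0, 241.0], [239.5, 240.6], [239.2, 239.7]]
estimates = natural_perturbations(loads, voltages)
print(estimates)
```

Comparing every pair means the work grows with the square of the number of samples, and usable pairs are rare when many loads move at once.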

For one day's data, a test algorithm took 15 minutes to run. Two days' data took an hour. A week's data took 12 hours. A year's data was going to take 3.8 years to process. I'll leave that task for someone else.
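Those timings look roughly quadratic: doubling the data quadrupled the runtime. The sketch below assumes runtime = 15 minutes x (days squared), which is my reading of the numbers rather than a measured model; the function name is hypothetical.

```python
def quadratic_runtime_minutes(days, minutes_for_one_day=15.0):
    """Assumed scaling: runtime grows with the square of the amount of data."""
    return minutes_for_one_day * days ** 2


for days in (1, 2, 7, 365):
    minutes = quadratic_runtime_minutes(days)
    print("%3d days of data: %.1f hours" % (days, minutes / 60.0))

# Express the one-year estimate in years of computing time.
years = quadratic_runtime_minutes(365) / (60.0 * 24.0 * 365.0)
print("One year of data: about %.1f years" % years)
```

Under that assumption, two days comes out at exactly one hour, a week at a little over 12 hours, and a year at about 3.8 years, in line with the figures quoted here.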